Risk Management

Security Risk Management

Table of Contents

- Security Risk Management

- Introduction

- Terms and definitions

- Purposes and Principles

- Risk Management Approaches

- Risk assessment

- Automation - Common practices and opportunities

- References

Introduction

Risk management is crucial for ensuring information security within an organisation. Its implementation is regulated by international standards and practices, which describe it as an iterative process composed of:

- the definition of a plan and an approach;

- a phase of investigation of the different factors involved in creating the risk;

- a phase dedicated to its evaluation, mainly along two lines: qualitative analysis and quantitative analysis;

- a risk response phase, where adequate countermeasures are applied to the risks according to their priority;

- a phase of recording and monitoring the risks by means of a dedicated register, which is the starting point of the next process iteration.

All these phases are paired with a collateral but important activity: communicating the risk and raising awareness of it across the whole organisation.

Every variant of the risk management process shares a weak point: all the assumptions and calculations are an approximation of a very complex entity. Risk is composed of many unpredictable factors that are unknown in most phases of the process. All the different approaches and methods aim to reduce this approximation to a minimum, relying both on experience (qualitative approaches) and on mathematical theories (quantitative approaches).

Terms and definitions

| Term | Definition |

|---|---|

| Risk | Effect of uncertainty on the objectives |

| Risk management | Coordinated activities to direct and control an organisation with regard to risk |

| Stakeholder | Person or organisation that can affect, be affected by, or perceive themselves to be affected by a decision or activity |

| Risk Source | Element which alone or in combination has the potential to give rise to risk |

| Event | Occurrence or change of a particular set of circumstances |

| Consequence or Impact | Outcome of an event affecting objectives |

| Likelihood or Probability | Chance of something happening |

| Controls – Countermeasure | Measure that contains and/or modifies risk |

| Risk Owner | The person who coordinates efforts to mitigate and manage the risk with other individuals who own parts of the risk |

| Asset | Anything that has value to the organisation, its business operations and their continuity, including information resources that support the organisation’s mission |

| Threat | Any circumstance or event with the potential to adversely impact an asset, e.g., through unauthorised access, destruction, disclosure, modification of data, and/or denial of service |

| Risk Statement | The negative impact on an asset in case a threat occurs |

| Security Control | Safeguards or countermeasures to avoid, detect, counteract, or minimize security risks |

| Information Security Risk | Impacts to an organisation and its stakeholders that could occur due to threats and vulnerabilities associated with the operation and use of information systems and their environments |

| Cyber Security Risk | Risk related to cyber security. Examples of threats include cyber attacks or data breaches |

| Vulnerability | A weakness, design flaw, or implementation error that can lead to an unexpected event compromising the security of a system, network, application, or protocol |

Purposes and Principles

The main purpose of risk management is the creation and protection of value within an organisation: risk management is the identification, evaluation, and prioritisation of risk, followed by coordinated and economical application of resources to minimise, monitor, and control the probability or consequences of unfortunate events or to maximise the realisation of opportunities.

Regardless of the specific sector or field of application, a prioritisation process is always implemented so that the risks with the greatest consequences and the greatest likelihood of occurring are tackled first.

Even if the aim is quite clear, managing risk does not generate a direct and immediate income for the organisation, while it has a direct and immediate cost. The importance of managing risks that could lead to a greater loss in the future is not always well understood at the decision-making level. For this reason, one of the most important goals of risk management is to minimise spending (manpower, time, resources, etc.) while at the same time minimising the negative effects of risks on the organisation.

Risk Management Approaches

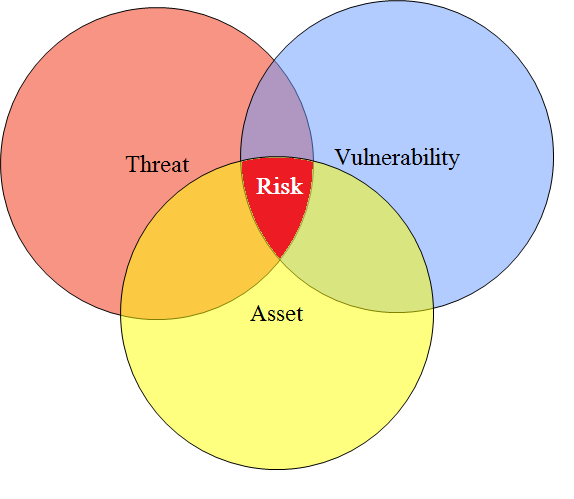

Risk within an organisation is defined as the potential that a given threat will exploit vulnerabilities of an asset or group of assets to cause loss or damage. This concept is the core of any Risk Management approach:

- a threat is a potential cause of an unpredictable event that could cause harm or loss to an organisation;

- a vulnerability is a weakness that can be exploited by a threat; it can be caused by a human mistake, a weak point or flaw in technology, or any other unexpected behaviour or state.

The threat-vulnerability pair leads to an event characterised by two quantities. The first is a likelihood, which can be estimated or measured and is in effect the probability that the vulnerability will be exploited by the threat. The second is the impact that the event generates, i.e. the other quantity contributing to the risk.

Risk management is the iterative process used to identify risks, assess their consequences for the business and their likelihood of occurrence, prioritise the risks to be treated and the actions to be undertaken to reduce them, keep track of the current status at each iteration and monitor its evolution. The aggregated data is used to promote risk awareness within the organisation and to embed the risk management process at all levels of the hierarchy.

Industrial standards

Industry standards are a set of criteria within an industry relating to the standard functioning and carrying out of operations in their respective fields of business. In other words, they are the generally accepted requirements followed by the members of an industry. In the specific case of risk frameworks, a group of stakeholders, mainly from the Anglo-Saxon world, began formalising their risk management practices into a unified and common system. Other bodies later created their own internal standards, which have also been adopted.

ISO 31000 and ISO 27005

ISO 31000 is an international standard published in 2009 and updated in 2018 that provides only principles and guidelines for effective risk management, outlining a generic approach to risk management that can be used in any field and by any type of organisation. The standard provides a uniform vocabulary and concepts for discussing risk management, along with guidelines and principles on how to structure a Risk Management process that can help to undertake a critical review of your organisation’s risk management process.

The standard does not provide detailed instructions or requirements on how to manage specific risks, nor any advice related to a specific application domain. It remains at a generic level, underlining aspects such as the importance of defining objectives before attempting to control risks and the effect of uncertainty on those objectives. It introduces risk appetite, i.e. the level of risk that the organisation accepts to take on in return for expected value, and reinforces the idea that risk management has to be part of strategic decision-making.

The framework for risk management is a cyclical process composed of:

- Context, Criteria and Scope definition, i.e. the boundaries of the risk management process

- Risk Assessment phases, divided into:

  - Risk Identification

  - Risk Analysis

  - Risk Evaluation

- Risk Treatment

Then, as side activities:

- Communication and Consultation of the risk management results

- Monitoring and Reviewing of the scopes, context and methodologies

- Recording and Reporting of all the evidence, classifications and documents used

ISO 27005 is the adaptation of the more generic ISO 31000 to the specific domain of information security risk management. It provides a continual process consisting of a sequence of activities, some of which are iterative:

- Establish the risk management context (e.g. the scope, compliance obligations, approaches/methods to be used and relevant policies and criteria such as the organisation’s risk tolerance or appetite);

- Quantitatively or qualitatively assess (i.e. identify, analyse and evaluate) relevant information risks, taking into account the information assets, threats, existing controls and vulnerabilities to determine the likelihood of incidents or incident scenarios, and the predicted business consequences if they were to occur, to determine a ‘level of risk’;

- Treat (i.e. modify using information security controls, retain or accept, avoid and/or share with third parties) the risks appropriately, using those levels of risk to prioritise them;

- Keep stakeholders informed throughout the process;

- Monitor and review risks, risk treatments, obligations and criteria on an ongoing basis, identifying and responding appropriately to significant changes.

Open Foundation

The FAIR (Factor Analysis of Information Risk) framework, proposed by The Open Group, is meant to be an improved and more detailed framework. It can be used as a stand-alone activity, but it can also be nested in the ISO 27005 process to strengthen its weak points or overly generic parts.

The FAIR framework goes into greater detail, which brings some advantages: the chance to go deep when necessary, a better understanding of the factors contributing to risk thanks to their breakdown structure, and the ability to better troubleshoot analysis performed at higher layers of abstraction. This does not imply that using FAIR always requires analysis at the deepest layers of granularity, which would be impractical and unrealistic for risk analysis. The approach is presented as flexible enough to be performed using data estimates at higher levels of abstraction, enabling the user to choose the appropriate level of analysis based on the time, data, complexity and significance of the scenario. The model does not offer a simplified view of a complex phenomenon like risk, since over-simplified models lead to false conclusions and recommendations.

The core elements of this nested framework are the Risk Taxonomy, used for risk identification and characterisation, and a probabilistic approach to the calculation of the risk level based on Monte Carlo simulations. Both elements are described in more detail in the relevant sections below.
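As a rough illustration of what a Monte Carlo calculation of a risk level can look like, the following minimal sketch simulates the total loss of many hypothetical years. It is not the Open FAIR calculation itself: the function name, the triangular distributions and all the numeric ranges are assumptions chosen only for illustration.

```python
import random
import statistics


def simulate_annual_loss(freq_range, loss_range, runs=10_000, seed=42):
    """Return one simulated total annual loss per run.

    freq_range: (min, most_likely, max) loss events per year (assumed estimate)
    loss_range: (min, most_likely, max) loss per event in EUR (assumed estimate)
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    f_min, f_mode, f_max = freq_range
    l_min, l_mode, l_max = loss_range
    totals = []
    for _ in range(runs):
        # Sample how many loss events happen in this simulated year.
        events = round(rng.triangular(f_min, f_max, f_mode))
        # Sample a loss magnitude for each event and add them up.
        total = sum(rng.triangular(l_min, l_max, l_mode) for _ in range(events))
        totals.append(total)
    return totals


losses = simulate_annual_loss(freq_range=(0, 2, 6), loss_range=(5_000, 20_000, 100_000))
mean_loss = statistics.mean(losses)
p90_loss = sorted(losses)[int(0.9 * len(losses))]  # 90th percentile
print(f"mean annual loss: {mean_loss:,.0f} EUR, 90th percentile: {p90_loss:,.0f} EUR")
```

The result is a distribution of losses rather than a single score, which is what allows statements such as "in 90% of the simulated years the loss stays below a given amount".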

Other approaches

Other approaches, rather than emerging from formalised practices or industrial activities that have been standardised into a repeatable format, have been designed or derived directly from national or international regulations. They focus not only on the assessment phase but also on compliance with national laws. The following sections describe the most relevant ones and are not meant to be a complete list.

OCTAVE

The Operationally Critical Threat, Asset, and Vulnerability Evaluation℠ (OCTAVE®) approach defines a risk-based strategic assessment and planning technique for security.

OCTAVE is a self-directed approach, meaning that people from an organisation assume responsibility for setting the organisation’s security strategy. OCTAVE-S is a variation of the approach tailored to the limited means and unique constraints typically found in small organisations. OCTAVE-S can be led by a small, interdisciplinary team of an organisation’s personnel who gather and analyse information, producing a protection strategy and mitigation plans based on the organisation’s unique operational security risks. To conduct OCTAVE-S effectively, the team must have broad knowledge of the organisation’s business and security processes, so it will be able to conduct all activities by itself.

The OCTAVE Automated Tool was implemented by the Advanced Technology Institute (ATI) to help users apply the OCTAVE and OCTAVE-S approaches. The tool assists the user during the data collection phase, organises the collected information and finally produces the study reports.

CRAMM

CRAMM is a risk analysis method developed by the British government organisation CCTA (Central Computer and Telecommunications Agency), now renamed the Office of Government Commerce (OGC). A tool with the same name has been developed to support the method, since CRAMM is rather difficult to use without it. The first releases of CRAMM (method and tool) were based on best practices of British government organisations. At present CRAMM is the UK government’s preferred risk analysis method, but it is also used in many countries outside the UK and by international organisations such as NATO and the Dutch armed forces. CRAMM is especially appropriate for large organisations, such as government bodies and industry, and is composed of three stages:

- The establishment of the objectives for security, by defining the boundary of the risk assessment study, its assets and the value of the data held, thereby also understanding the business and technical impacts on the systems and software involved.

- The assessment of the risks to the proposed system and the requirements for security, by identifying and assessing the type and level of threats and vulnerabilities that may affect the system and combining their values to obtain the risk level.

- The identification and selection of countermeasures, which are meant to cover the risks calculated in stage 2 and are selected from a predefined but very large set of countermeasures.

NIST SP800-30 and AURUM

NIST SP800-30 is one of the Special Publication 800-series reports. It gives very detailed guidance on what should be considered within risk management and risk assessment in computer security. It is largely based on detailed checklists, graphics, flowcharts and mathematical formulas, as well as references and libraries of requirements, threats, vulnerabilities, etc., that are mainly based on US regulations and the US cybersecurity ecosystem.

One of the frameworks derived directly from NIST is AURUM (AUtomated Risk and Utility Management). A complete explanation of the framework and a case study can be found in the articles "AURUM: A Framework for Information Security Risk Management" and "Information Security Risk Management: In Which Security Solutions Is It Worth Investing?" by A. Ekelhart, S. Fenz and T. Neubauer.

EBIOS

EBIOS is a comprehensive set of guides that can be used through a tool dedicated to Information System risk managers. Originally developed by the French government, it is now supported by a club of experts of diverse origin that is active in maintaining the EBIOS guides. It produces best practices as well as application documents targeted to end-users in various contexts. EBIOS is widely used in the public as well as in the private sector, both in France and abroad. It is compliant with major IT security standards.

EBIOS gives risk managers a consistent and high-level approach to risks. It helps them acquire a global and coherent vision that supports decision-making by top managers on global projects (business continuity plan, security master plan, security policy), as well as on more specific systems (electronic messaging, mobile networks or web applications, for instance). EBIOS clarifies the dialogue between the project owner and the project manager on security issues. In this way, it contributes to relevant communication with security stakeholders and spreads security awareness.

The EBIOS approach consists of a cycle of 5 phases:

- Phase 1 deals with context analysis in terms of global business process dependency on the information system (contribution to global stakes, accurate perimeter definition, relevant decomposition into information flows and functions).

- Both the security needs analysis and threat analysis are conducted in phases 2 and 3 in a strong dichotomy, yielding an objective vision of their conflicting nature.

- In phases 4 and 5, this conflict, once arbitrated through a traceable reasoning, yields an objective diagnostic on risks. The necessary and sufficient security objectives (and further security requirements) are then stated, proof of coverage is furnished, and residual risks made explicit.

Ebios is also the name of a software tool developed by the Central Information Systems Security Division (France) to support the EBIOS method. The tool helps the user to carry out all the risk analysis and management steps according to the five EBIOS phases. It allows all the study results to be recorded and the required summary documents to be produced, with the possibility of extending the various lists of threats and vulnerabilities.

Risk assessment

Risk assessment is the phase of the general risk management process that aims to estimate and evaluate the risk within a defined context and to determine its factors and causes. Its output is used to define adequate countermeasures to control or reduce the risk. The methodology can be applied to any field or level, from high-level strategic decisions to a small project. It has very different practical variants, but it is based on some phases that are shared among all the approaches:

- Context definition: the first phase defines the perimeter of the assessment. Risk is present at all levels of an organisation, so the aim is to decide where to apply the risk assessment. A poorly defined context leads either to considering too many factors, creating a never-ending assessment, or to excluding something that directly influences the scope. The subject of the assessment is also known as the asset.

- Risk identification: once the context, i.e. the boundaries around the assessment, is defined, it is possible to start understanding the potential risks that could materialise during the different life stages of the asset. In the specific field of IT risk, at this stage it is also possible to identify both the threats that could generate a risk and the vulnerabilities linked to these threats on a specific asset. In this phase it is recommended to use a worst-case approach in order not to exclude any possible risk.

- Risk analysis and evaluation: the last part of the process aims to quantify the risk in a clear and understandable way, usually as a ranking based on a description or on a scale of values, which also implies the prioritisation of the risks to be tackled. This output is relevant at all levels of application: at the strategic level, for example, it helps to define and allocate an adequate budget and resources and to evaluate the costs and benefits of each remediation action.

This last phase is crucial, because a wrong evaluation can lead to ignoring a relevant risk that, if it materialises in the future, can critically compromise a project, a process or the whole business of the organisation. On the other hand, overestimating a risk can dramatically increase the cost of managing a situation that might never happen or that would have a very limited impact.

The evaluation phase is strongly linked to the concept of the Risk Acceptance Threshold: once a scale of values is determined, it is important to define which levels of risk have to be treated, and in which order, and which levels can be skipped in the treatment phase and simply recorded in a register, named the Risk Register, which contains all the risks for an organisation and is updated regularly.

Risk evaluation can be performed in many different ways and with many methods. There is no perfect methodology for all situations, but it is important to understand which one is adequate for the resources that can be allocated, for the data available to perform the evaluation, and for the size of the organisation and its processes and projects. The common point is the definition of two main aspects of the risk, the likelihood and the consequences, which are combined in different ways to obtain a risk level that is then evaluated against a series of defined levels.

The output of this process is a list of prioritised risks that are the input for the Risk Treatment.
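The role of an acceptance threshold and of a risk register can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a prescribed method: the `Risk` class, the 1 to 5 scales, the product used as risk level and the threshold value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (lowest) to 5 (highest)
    impact: int      # assumed scale: 1 (lowest) to 5 (highest)

    @property
    def level(self) -> int:
        # One common way of combining the two factors; others exist.
        return self.likelihood * self.impact


def prioritise(register, acceptance_threshold):
    """Split the register into risks to treat (highest first) and accepted risks."""
    to_treat = sorted(
        (r for r in register if r.level > acceptance_threshold),
        key=lambda r: r.level,
        reverse=True,
    )
    accepted = [r for r in register if r.level <= acceptance_threshold]
    return to_treat, accepted


# Hypothetical register entries, for illustration only.
risk_register = [
    Risk("Laptop theft", likelihood=3, impact=2),
    Risk("Ransomware on file server", likelihood=2, impact=5),
    Risk("Office printer outage", likelihood=4, impact=1),
]

to_treat, accepted = prioritise(risk_register, acceptance_threshold=5)
print([r.name for r in to_treat])  # input for the risk treatment phase
print([r.name for r in accepted])  # stay in the register and are monitored
```

Accepted risks are not discarded: they remain in the register so that a later iteration can re-evaluate them if their likelihood or impact changes.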

The main distinction in the methodologies that could be applied is between qualitative analysis and quantitative analysis, with some hybrid and alternative approaches, as described in the next paragraphs:

Qualitative Analysis

In qualitative analysis, the impact and likelihood of potential consequences are presented and described in detail using scales and examples that are usually adjusted to suit the context and size of the target of the analysis. This methodology is very useful as an initial assessment to identify risks that will be the subject of further, detailed analysis. Moreover, it can be applied where non-tangible aspects of risk are to be considered, such as reputation and the trust of customers and partners, or when the information, numerical data or resources necessary for a statistically acceptable quantitative approach are lacking.

Qualitative risk analysis evaluates the likelihood and the impact of potential risks on a pre-defined scale, ranging from low to high with several intermediate levels. As a method of analysis it is subjective, because it is generally carried out by individuals with a responsibility for the asset being evaluated and it is based on their personal perception of the risk probability and its consequences, and on their knowledge of possible threats and vulnerabilities. The purpose of such analysis is to increase the awareness of the different stakeholders about the most probable risks and those with the most severe consequences, to identify the weak points of a process or a project, and then to plan and implement a response that reduces the cost of such a situation for the organisation.

Probability and Impact Matrix

The Likelihood and Impact Matrix is one of the most commonly used qualitative risk assessment methods. It is based on the two components of risk: the probability or likelihood of occurrence, and the impact or consequence if it occurs. The matrix is a two-dimensional grid that maps the likelihood of the risks' occurrence against their effect on the objectives. The risk score, often referred to as risk level or degree of risk, is calculated by multiplying the two axes of the matrix.

Risk = Likelihood × Impact

The risk level is calculated from these two values and is generally defined from low to high with intermediate values. The ratings for likelihood and impact are based on information gathered from the risk owner, usually through interviews and supporting tools such as questionnaires or checklists. These scores must be customised by each organisation and be specific to the single project or activity in scope of the risk assessment. The results of each risk assessment, based on the defined metrics, are used to prioritise the risk response and to plan a strategy that defines which kinds of risk have to be addressed first and in which order. In its simplest form, the combination can be reduced to taking the higher of the two values, likelihood and impact.

- Low impact and Low Likelihood: risks characterised as low have a low rating for both likelihood and impact. They usually do not undergo any specific treatment, but they are included in the risk register and monitored for future changes or evolutions.

- High impact and Low Likelihood: risks with high impact but low likelihood are most of the time categorised as moderate risks, depending on the organisation's approach and defined thresholds. These events rarely occur and are comparable to rare catastrophes. Their past occurrences cannot be tracked for lack of data, so their likelihood has to be evaluated subjectively and adequate measures have to be taken to tackle the problem.

- Low impact and High Likelihood: risks with low impact but high likelihood are most of the time categorised as moderate risks, depending on the organisation's approach and defined thresholds. These are minor risks that, taken alone, usually constitute a low risk, but that combined together and with a high likelihood could lead to a higher risk.

- High impact and High Likelihood: risks characterised as high have both a high impact and a high likelihood. A risk of this level can be considered a threat to the objective and should be addressed with high priority, with a response that could range from risk mitigation to stopping the whole project or initiative if the risk is too great.
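The four cases above can be sketched as a lookup over a two-level matrix. The function name and the two-level `low`/`high` scale are assumptions made for illustration; real matrices usually have more levels and organisation-specific thresholds.

```python
# Risk level for each (impact, likelihood) pair, following the four cases above.
RISK_MATRIX = {
    ("low", "low"): "low",
    ("high", "low"): "moderate",
    ("low", "high"): "moderate",
    ("high", "high"): "high",
}


def risk_level(impact: str, likelihood: str) -> str:
    """Look up the qualitative risk level for an impact/likelihood pair."""
    try:
        return RISK_MATRIX[(impact, likelihood)]
    except KeyError:
        raise ValueError(f"unknown rating: impact={impact!r}, likelihood={likelihood!r}")


print(risk_level("high", "low"))   # moderate
print(risk_level("high", "high"))  # high
```

Keeping the matrix as data rather than as nested conditions makes it easy for an organisation to adjust the levels to its own thresholds.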

In general, the estimation of likelihood and impact is based only on the experience of the evaluator and on his or her knowledge of the context and all its factors. To reduce this unpredictability and help both the assessor and the risk owner, the two main factors are usually defined with a breakdown of their levels.

Likelihood factors: a typical way of describing the likelihood on a more detailed scale is to indicate the estimated occurrence of an event within a defined time slot. An example could be:

| Likelihood level | Occurrences |

|---|---|

| Very Likely | More than 10 times per year |

| Likely | 5–9 times per year |

| Possible | 2–4 times per year |

| Unlikely | Once per year or less |

| Very Unlikely | Once every 2–3 years |

This example has been chosen to show that most of the time the likelihood levels are decided in an arbitrary way. Good practice says that those values should be extracted from statistical knowledge or from the analysis of similar situations in the past. However, this can be done only for large and well-known events, not for most new or completely unknown situations. In those cases the likelihood scale is again a pure guess, and it risks flattening the levels and producing an inconsistent final risk value.

Other time slot divisions could be applied according to the time scope, but the result will be more or less the same. In general, this is the simplest way to estimate the likelihood without any ground data.
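A frequency-based scale like the one in the table can be sketched as a simple mapping. The function name is hypothetical, and two boundaries are assumptions because the example table leaves them open: exactly 10 events per year is treated as Likely, and 0.5 events per year (once every two years) is used as the limit between Unlikely and Very Unlikely.

```python
def likelihood_level(events_per_year: float) -> str:
    """Map an estimated yearly frequency to the example likelihood scale."""
    if events_per_year < 0:
        raise ValueError("frequency cannot be negative")
    if events_per_year > 10:
        return "Very Likely"
    if events_per_year >= 5:   # assumption: exactly 10 is treated as Likely
        return "Likely"
    if events_per_year >= 2:
        return "Possible"
    if events_per_year > 0.5:  # assumption: boundary with Very Unlikely
        return "Unlikely"
    return "Very Unlikely"     # once every 2 years or rarer


for freq in (12, 7, 3, 1, 0.4):
    print(freq, "->", likelihood_level(freq))
```

Having to choose these boundaries explicitly is a small demonstration of how arbitrary such a scale can be when it is not backed by historical data.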

Another approach, proposed by OWASP in its methodologies and very adaptable to the specific field of cyber risk, is to define in more detail the two main aspects of risk, i.e. threat agent factors and vulnerability factors. A numeric value from 0 to 9 is associated with each of the different factors, so that the single value of each factor contributes to a quantitative measurement.

An example applied to cyber risk uses the following factors: skill level, i.e. the technical skills of the group of threat agents that would like to perform an attack; motive, i.e. the motivation to perform the attack; opportunity, i.e. the resources and opportunities required to exploit a vulnerability; and size, i.e. the dimension of the group of threat agents.

The factors, each evaluated on a scale from 0 to 9, are averaged, and the resulting value is associated with a likelihood level, e.g. low, medium or high.
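The averaging step can be sketched as follows. The function name and the sample scores are hypothetical; the band boundaries (below 3 low, from 3 to below 6 medium, 6 and above high) are the ones commonly quoted for the OWASP Risk Rating Methodology and should be checked against its current version.

```python
from statistics import mean


def owasp_likelihood(factors: dict) -> tuple:
    """Average the 0-9 factor scores and map the result to a level."""
    for name, score in factors.items():
        if not 0 <= score <= 9:
            raise ValueError(f"{name} must be between 0 and 9, got {score}")
    average = mean(factors.values())
    if average < 3:
        level = "LOW"
    elif average < 6:
        level = "MEDIUM"
    else:
        level = "HIGH"
    return average, level


# Hypothetical scores for the four threat agent factors.
threat_agent = {"skill_level": 6, "motive": 4, "opportunity": 7, "size": 5}
score, level = owasp_likelihood(threat_agent)
print(score, level)  # 5.5 MEDIUM
```

The same function can be reused for the vulnerability factors, since they are scored on the same 0 to 9 scale.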

In the same way, the impact can have a basic and intuitive scale, usually based on financial aspects, such as:

| Impact level | Loss |

|---|---|

| Extremely low | 0–10k € |

| Low | 10k–100k € |

| Moderate | 100k–500k € |

| Serious | 500k–1M € |

| Extreme | Above 1M € |

This example shows a simple and intuitive way of estimating the impact that takes only the economic factor into consideration. It usually requires refinement to consider different factors and domains of impact, such as the division between technical impact, strongly related to the IT and cybersecurity field, and business impact:

- The technical impact, which mainly refers to data, systems or functions, is based on 3 main factors, also known as the CIA triad. Confidentiality is the requirement that data should be accessible only to the persons with the right to access or modify it and, conversely, that all persons should be allowed to access all the data that they use. Integrity is the need to maintain the consistency, accuracy and trustworthiness of data over its entire life cycle. Availability is the property that makes the data ready to be accessed or used at any time it is needed. A fourth factor is usually added to these 3: traceability or accountability, which considers whether, and to what extent, the activities of the threat agent can be traced.

- The business impact sits at a higher level than the technical impact and is mainly applied at executive level. It refers to how a generic risk, if it materialises, can slow down or disrupt one or more business processes or, in the worst case, the whole organisation, and in this sense it is strictly related to an estimated monetary value. Being an evaluation made at a higher level, and not based on atomic or restricted assets, it is not easy to measure. It is generally divided into 4 areas: financial damage, i.e. the pure economic loss in terms of lost revenue or fines; reputation damage, in terms of loss of trust from customers, business partners and, in general, public opinion and the media; loss of compliance with laws, regulations or standards; and finally privacy violation, i.e. the amount and sensitivity of the data disclosed.

It is possible to include both technical and business impact in the evaluation of this factor. Depending on the context, and mainly on the asset, one domain can weigh more than the other or the two can have a balanced relevance. After evaluating all the relevant factors in the two areas, it is possible to obtain a numeric value that can be classified according to a pre-defined scale and that is the input for the risk prioritisation and treatment phase.
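One way to combine the two domains into a single numeric value is a weighted average. The sketch below is only an assumption about how the weighting could be done: the function name, the 0 to 9 scores and the weights are hypothetical and would have to be set by the organisation.

```python
def combined_impact(technical: dict, business: dict, technical_weight: float = 0.5) -> float:
    """Weighted average of the technical and business impact scores (0-9 each)."""
    if not 0.0 <= technical_weight <= 1.0:
        raise ValueError("technical_weight must be between 0 and 1")
    tech_avg = sum(technical.values()) / len(technical)
    biz_avg = sum(business.values()) / len(business)
    return technical_weight * tech_avg + (1.0 - technical_weight) * biz_avg


# Hypothetical scores for one asset.
technical = {"confidentiality": 7, "integrity": 5, "availability": 3, "accountability": 1}
business = {"financial": 8, "reputation": 6, "compliance": 4, "privacy": 6}

print(combined_impact(technical, business))                         # equal weights
print(combined_impact(technical, business, technical_weight=0.25))  # business-heavy
```

With these scores the technical average is 4.0 and the business average is 6.0, so shifting the weight towards the business domain raises the combined value from 5.0 to 5.5.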

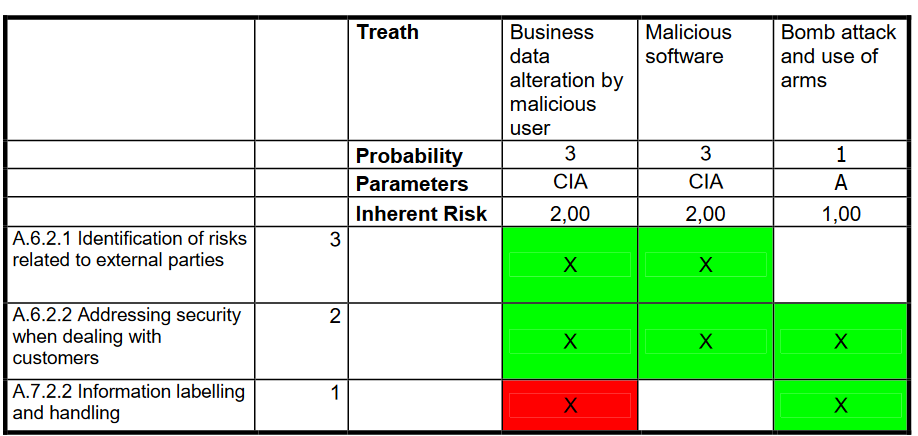

VERA - Very Easy Risk Assessment

Another qualitative methodology is the one named (Very Easy Risk Assessment), introduced by Cesare Gallotti, it has as aim to simplify the analisys and evalution phase, without loosing accuracy, focusing more on the implementation and improvement of security controls instead of the analitical part, this qualitative methodology is based on the assumption that the pure quantitative analisys is not accurate in most of its implementation due to the impossibility to exactly define all the numeric factors because the numeric and quantitative methodologies are useful only in specific situations and within a limited perimeter.

The VERA methodology is mainly focused on assessing the services and not the single assets, a strictly asset based assessment requires the so called CMDB (Configuration management database), a database that collects all the assets of an organisation with a detailed description and classification, the informations conteined in the CMDB are an input for the Risk Assessment but this database, also for mid-size enterprises, is not most of the time hard to mantain and update and could bring wrong or outdated factors to the evaluation phase, for this reason the VERA methodology is focused on services or processes and not on assets, except in case a more context-restricted analisys is required.

The informations necessary that will be input of the evaluation are collected with the so called "collaborative" approach: an expert facilitator with the help of the risk owner and collaborators with a deep knowledge of business processes and technological aspects are gathered to perform this activity.

The first phase of the analisys is, as motivated before, the service or process: each one of them has to be defined indicating its features, its management and utilization, its phisical boundaries and its technologies.

For the impact evaluation every service is evaluated in terms of damage to the organisation according to the CIA parameters, on a scale from 1 (low) to 3 (high):

| Service | Confidentiality | Integrity | Availability |
|---|---|---|---|
| Service 1 | 1 | 3 | 2 |
| Service 2 | 2 | 1 | 3 |

The following step is the Threat Evaluation: each service is assessed against the threats that could menace it, considering their effect on the CIA parameters. The baseline list of threats is composed of 41 entries borrowed from NIST SP 800-30, but it can easily be extended or adapted to the specific context. For each threat a likelihood between 1 (low) and 3 (high) is indicated; the value calculated for each threat, weighted with the impact calculated in the previous phase, is called "Inherent Risk".

This methodology also assesses the countermeasures already in place in the organisation to reduce the possible risks, named Security Controls. Each control is assessed on a scale from 1 to 3 that measures its efficacy; the baseline is composed of 133 security controls extracted from the ISO 27001 standard.

The risk calculation is made by comparing the Inherent Risk for each threat with the value attributed to the countermeasure applied by the Security Control: if the countermeasure evaluation is higher than the associated risk, the risk can be considered treated or adequately covered (green box in the grid); otherwise the risk is not adequately covered and requires a form of risk treatment (red box in the grid).

The resulting matrix is used to plan the risk treatment for each of the risks not covered by adequate countermeasures. The key point of this methodology is the introduction of the security controls: being evaluated with parameters similar to those of the risks, they also give an insight into what the organisation is currently doing and how effective it is against a specific risk.
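The comparison between Inherent Risk and Security Controls described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the VERA calculation itself: the text does not fix the exact formula, so the inherent risk is assumed here to be the likelihood multiplied by the highest impact among the CIA parameters affected by the threat, and the control efficacy (1 to 3) is assumed to be multiplied by 3 so that both values share the same 1 to 9 range. The `Threat` class, the threat names and the scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    name: str
    likelihood: int        # 1 (low) to 3 (high)
    affects: tuple         # CIA parameters hit by the threat, e.g. ("I", "A")
    control_efficacy: int  # 1 to 3, efficacy of the control already in place


def inherent_risk(threat, impact):
    # ASSUMPTION: likelihood weighted by the worst impact among affected parameters.
    worst = max(impact[p] for p in threat.affects)
    return threat.likelihood * worst


def is_covered(threat, impact):
    # ASSUMPTION: efficacy is rescaled to the 1-9 range of the inherent risk.
    return threat.control_efficacy * 3 > inherent_risk(threat, impact)


# Impact values of "Service 1" from the table above.
service_1 = {"C": 1, "I": 3, "A": 2}

threats = [
    Threat("Malware infection", likelihood=2, affects=("I", "A"), control_efficacy=3),
    Threat("Power outage", likelihood=3, affects=("A",), control_efficacy=1),
]

# Green = adequately covered, red = requires risk treatment.
grid = {t.name: ("green" if is_covered(t, service_1) else "red") for t in threats}
```

With these sample values the first threat ends up in a green box and the second in a red one, so only the second would enter the risk treatment plan.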

Quantitative Analysis

Quantitative methods imply a series of calculations aimed at measuring one risk against another or at comparing its numerical value with a predefined scale. This methodology is more accurate, although it requires more time, higher costs and a high level of knowledge of the context and of all the data; it allows decisions to be taken considering the cost/benefit ratio and provides a more stable baseline for the whole process of risk analysis. Quantitative methods are based on a strong mathematical foundation that includes various factors in the analysis; some methods applied or proposed are:

- FAIR and Monte Carlo Simulation
- Combined Quantitative Approach
- Fault Tree Analysis
- Analytic Hierarchy Process and Plan Do Check Act for Risk Evaluation

A common problem of the quantitative approaches is their repeatability and applicability in a real-life context: all the mathematical methods listed above require exact knowledge of the many factors that compose the final value of the risk level. The main source of these factors is usually statistics and probability, which can be estimated for some known and documented risks. For other factors or for quite new risks, which are also the most dangerous because they are not completely known or because no statistical information exists, all the values given are a pure guess and there is no way to estimate their accuracy, which undermines the whole mathematical calculation.

FAIR and Monte Carlo Simulation

FAIR (Factor Analysis of Information Risk) is a Risk Assessment methodology that includes a quantitative analysis based on:

- An ontology and classification of the factors that compose the risk and their relationships to one another. This aspect is relevant because the correlation between risks is missing in many approaches most of the time, and it is also hard to identify and estimate with reasonable accuracy.
- Methods for measuring the factors that drive risk.
- A computational engine that derives risk by mathematically simulating the relationships between measured factors (Monte Carlo Analysis).
- A scenario modeling construct to build and analyze risk scenarios obtained by the simulations.

FAIR Risk Ontology

The starting point is the FAIR Ontology, which defines all the components that build up the risk. The first level is made of the classical Loss Event Frequency, also named Likelihood, and Loss Magnitude, a.k.a. Impact; the model develops these two classical components into a more detailed tree of factors:

- Loss Event Frequency (LEF), the first-level factor, is defined as the probable frequency, within a given time-frame, that loss will materialize from a threat agent’s action. It is a strictly time-framed factor because without a defined time-frame every event is possible; for example, it can be expressed as the number of occurrences within a time-frame of 1 year.
  - Threat Event Frequency (TEF) is the probable frequency, within a given time-frame, that threat agents will act in a manner that may result in loss. It differs from LEF because a threat event may or may not result in a loss. TEF is expressed as a distribution using annualized values.
    - Contact Frequency (CF) is the probable frequency, within a given time-frame, that threat agents will come into contact with assets. The contact can be physical or logical and is expressed as an annualized distribution.
    - Probability of Action (PoA) is the probability that a human threat agent will act upon an asset once contact has occurred. The drivers for action could be the perceived value of the act from the threat agent’s perspective, the required effort, the hypothetical outcome and the perceived risk of the action for the threat agent.
  - Vulnerability is the probability that a threat agent’s actions will result in loss, usually described as a distribution of percentages.
    - Threat Capability (TCap), which contributes to the definition of Vulnerability, is the capability of a threat agent; it measures the hypothetical skills and resources of the agent and expresses them in percentiles.
    - Difficulty is the level of difficulty that a threat agent must overcome; it is determined by the security controls that oppose the action of a threat agent.

In the other branch of the tree we have the following factors:

- Loss Magnitude is the probable magnitude of primary and secondary loss resulting from an event. The primary impact falls directly on the risk owners and business owners; the secondary impact falls on all the stakeholders that are connected with the primary ones and are indirectly affected, e.g. business partners, customers etc.
  - Primary Loss Magnitude is the primary stakeholder loss that materializes directly as a result of the event; it could include loss of productivity, response to the loss event, replacement of lost assets, competitive advantage, fines and reputation.
  - Secondary Risk is the primary stakeholder loss exposure that exists due to the potential for secondary stakeholder reactions to the primary event, i.e. the fallout from the primary event. It is composed of:
    - Secondary Loss Event Frequency (SLEF), the percentage of primary events that have secondary effects.
    - Secondary Loss Magnitude, the loss associated with secondary stakeholder reactions.

All those factors are estimated by experts and, instead of the classical matrix of values, are formalized as distributions; those values are then the input for the quantitative engine based on Monte Carlo Simulations.

Monte Carlo simulation is a method for analyzing data that has significant uncertainty. It performs repeated random sampling to obtain numerical results; the output of a Monte Carlo simulation used in risk analysis is shown as probability distributions. The primary advantage of using Monte Carlo simulations in risk analysis is the ability of the method to perform thousands of calculations on random samples, allowing risk analysts to create a more accurate and defensible depiction of probability given the uncertainty of the inputs.

The final output of the automatic tool applying Monte Carlo Simulation is a distribution of Risk based on the above-mentioned factors defined as distributions; the numerical indication of risk is the input for the risk prioritization and treatment.

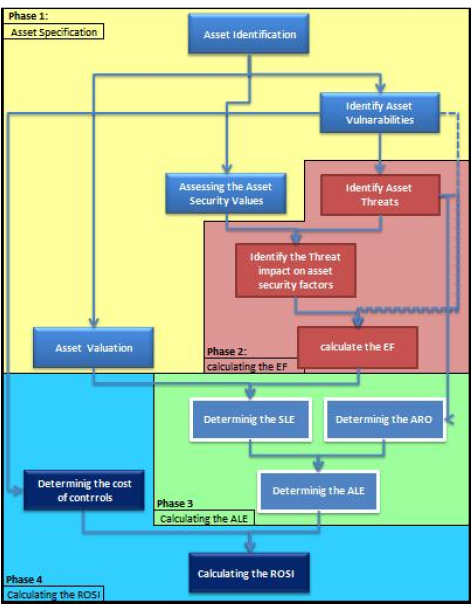
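A minimal sketch of how such a simulation could work is shown below. It is not the engine of any FAIR tool: it assumes that each factor is given by the experts as a (low, most likely, high) estimate and modelled with a triangular distribution, and that the annual loss of one simulated year is simply TEF × Vulnerability × Loss Magnitude. The function name `simulate_annual_loss` and all the numeric estimates are hypothetical.

```python
import random
import statistics


def simulate_annual_loss(tef, vuln, magnitude, runs=10_000, seed=42):
    """Monte Carlo estimate of the annual loss distribution.

    Each argument is a (low, most_likely, high) expert estimate.
    ASSUMPTION: factors are independent and triangularly distributed.
    """
    rng = random.Random(seed)
    losses = []
    for _ in range(runs):
        # random.triangular takes (low, high, mode)
        t = rng.triangular(tef[0], tef[2], tef[1])
        v = rng.triangular(vuln[0], vuln[2], vuln[1])
        m = rng.triangular(magnitude[0], magnitude[2], magnitude[1])
        # LEF = TEF * Vulnerability; annual loss = LEF * Loss Magnitude
        losses.append(t * v * m)
    return sorted(losses)


losses = simulate_annual_loss(
    tef=(1, 4, 12),                        # threat events per year
    vuln=(0.05, 0.2, 0.5),                 # probability that an event causes loss
    magnitude=(10_000, 50_000, 250_000),   # loss per event, in currency units
)
mean_loss = statistics.mean(losses)
p90 = losses[int(0.9 * len(losses))]       # 90th percentile of the simulated losses
```

The sorted list of simulated losses is the empirical risk distribution: summary values such as the mean or the 90th percentile can then feed the prioritization and treatment phase.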

Combined quantitative approach

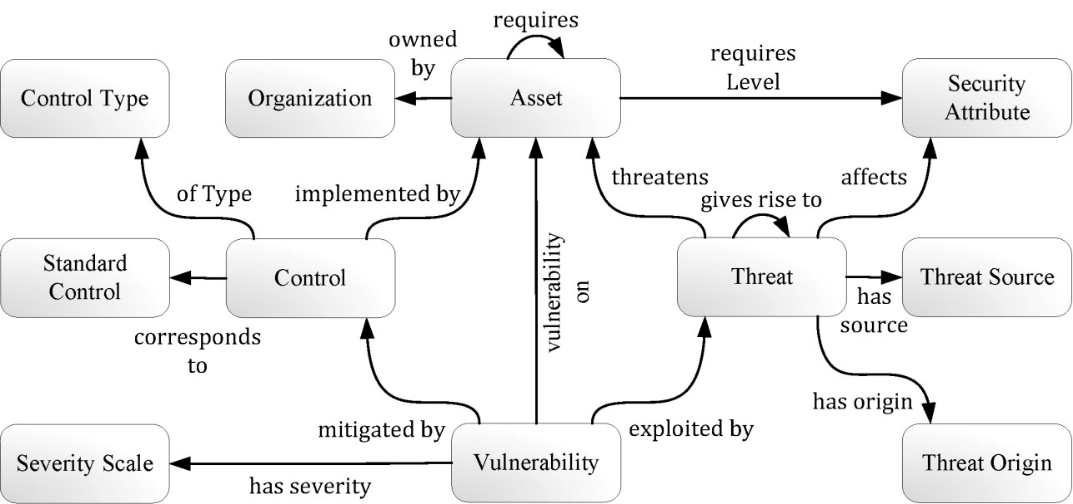

A quantitative approach that comes from the academic world but is based on practical tools is the one described in "A new quantitative approach for information security risk assessment" by A. Asoseh, B. Dehmoubed and A. Khani. It is based on Callio Secura 17799, a simple but effective tool for implementing an information security management system that automates the process described in the ISO/IEC 27001:2005 standard and has a module for risk assessment. This module is a very complete model for assessing the Exposure Factor (EF) of a risk, but it does not perform any calculation of the Business Impact. The other component is the Microsoft Risk Assessment model, which starts from the Exposure Factor and ends with the calculation of ROSI (Return on Security Investment).

The approach is a series of 4 steps:

- Asset Specification: the monetary value of the asset, its vulnerabilities and its security factors, assessed on the CIA triad, are specified.
- EF Calculation: the asset threats are ranked on a scale from 0 (not applicable) to 5 (very high), then each threat is associated with the security factors that it breaks; the EF is calculated as the product of the vulnerability values, the threat values and the CIA triad values.
- ALE Calculation: ALE (Annual Loss Expectancy) is the total amount of money that the organisation will lose in one year if nothing is done to mitigate the risk. It is composed of the SLE (Single Loss Expectancy), the amount of money lost for a single occurrence of the risk, and the ARO (Annual Rate of Occurrence), i.e. the number of times that the threat is reasonably expected to occur during one year.
- ROSI Calculation: ROSI is calculated as the ALE before applying any countermeasure, minus the ALE after the remediation, minus the cost of the controls and remediations applied.

The ROSI is calculated for every asset. A positive ROSI means that the remediations applied actually reduce the monetary cost in case the risk becomes real for that asset; otherwise the countermeasures are either not adequate or too expensive. Summing all the ROSI values gives the general ROSI for the whole organisation.

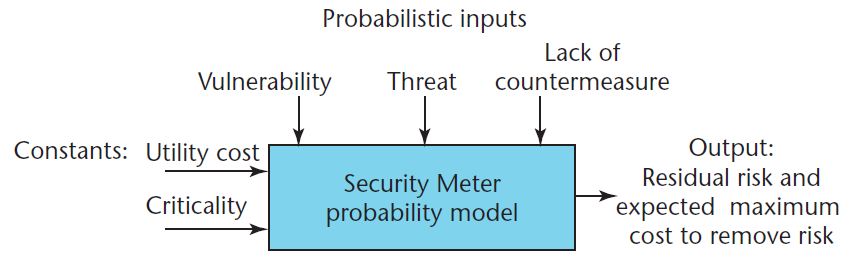
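The ALE and ROSI steps can be illustrated with a short Python sketch. It assumes the common textbook relations SLE = asset value × EF (with EF already normalised to a fraction between 0 and 1) and ALE = SLE × ARO, which may differ in detail from the scales used in the cited paper; the asset names and figures are hypothetical.

```python
def sle(asset_value, exposure_factor):
    # ASSUMPTION: Single Loss Expectancy = asset value * EF, with EF in [0, 1].
    return asset_value * exposure_factor


def ale(single_loss, aro):
    # Annual Loss Expectancy = SLE * Annual Rate of Occurrence.
    return single_loss * aro


def rosi(ale_before, ale_after, control_cost):
    # ALE before countermeasures, minus ALE after, minus the cost of the controls.
    return ale_before - ale_after - control_cost


# Hypothetical assets: EF before and after remediation, expected yearly occurrences.
assets = {
    "web shop": dict(value=200_000, ef_before=0.5, ef_after=0.125, aro=2, control_cost=60_000),
    "file server": dict(value=50_000, ef_before=0.25, ef_after=0.125, aro=1, control_cost=10_000),
}

per_asset = {}
for name, a in assets.items():
    before = ale(sle(a["value"], a["ef_before"]), a["aro"])
    after = ale(sle(a["value"], a["ef_after"]), a["aro"])
    per_asset[name] = rosi(before, after, a["control_cost"])

total_rosi = sum(per_asset.values())  # general ROSI for the whole organisation
```

In this example the web shop has a positive ROSI, while the file server has a negative one: its controls cost more than the loss they avoid, which is the "too expensive" case mentioned above.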

Fault Tree Analysis

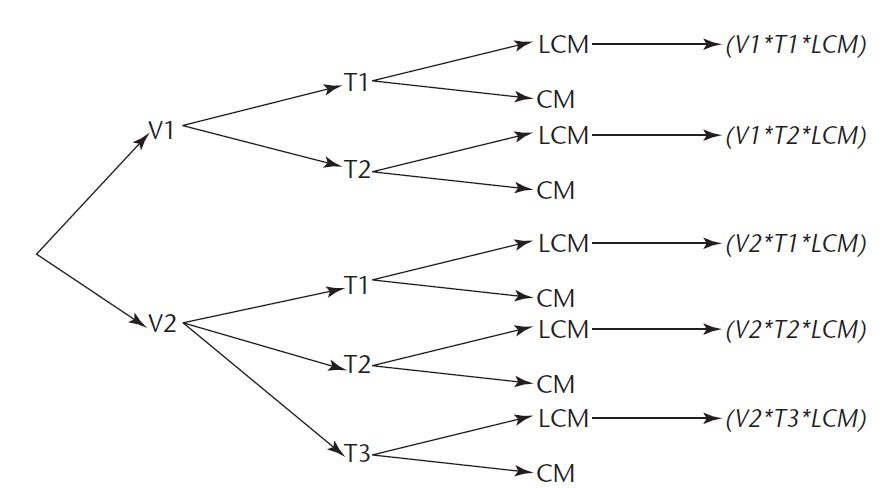

The article "Security Meter: A Practical Decision-Tree Model to Quantify Risk" by M. Sahinoglu presents a quantitative technique, with an updated repository of vulnerabilities, threats and countermeasures, to calculate risk. The model divides its inputs into two main categories. The probabilistic inputs are: the vulnerability value (ranging from 0 to 1.0 or expressed as a percentage); the threats, one or more for each vulnerability, each expressed as the probability that the vulnerability or weakness is exploited within a specific time-frame; and, for each threat, a countermeasure (CM) value between 0 and 1 with its counterpart, named Lack of Countermeasure (LCM), which scores the complementary value of the countermeasure. The deterministic inputs are: the system Criticality, a constant that indicates how critical or disruptive the system is in the event of entire loss, taken as a single value between 0 and 1 valid for all vulnerabilities; and the Utility Cost, i.e. the expected loss in monetary units for the particular system if it is completely destroyed and can no longer be utilized.

The first step is to calculate the Residual Risk as:

Residual risk = Vulnerability * Threat * LCM

This value is calculated for all the vulnerabilities, then it is used to calculate the Final Risk as:

Final risk = Residual risk * Criticality

From this value the Expected cost of loss is calculated:

ECL = Final risk * Cost.

In this way it is possible to prioritize the risks according to their costs and to the cost of the risk treatment applied to each specific risk. The same model can be applied when purely quantitative values are not available for each attribute in the decision-tree diagram and only qualitative adjectives such as H (high), M (medium) or L (low) are known: the approach can be modified to use the probabilities of H, M and L, that is P(H), P(M) and P(L). In case of hybrid data the same model can contain branches with purely quantitative measures and others with qualitative ones (H, M, L) that, under some hypotheses and probability rules, can still yield a consistent model for risk evaluation. All the results, being based on probabilities, can be tested with a Monte Carlo Simulation engine to estimate the accuracy of the model; for a detailed description, consult the full article in the references.
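The three formulas above can be chained in a small Python sketch. It assumes that the residual risks of the single vulnerability/threat branches are summed into a total residual risk before applying the Criticality, and that LCM = 1 - CM as described in the text; the function name and the sample probabilities are hypothetical.

```python
def expected_cost_of_loss(branches, criticality, cost):
    """Return (residual risk, final risk, ECL) for a decision tree.

    branches: iterable of (vulnerability, threat, cm) probabilities in [0, 1].
    ASSUMPTION: branch residual risks are summed over the whole tree.
    """
    # Residual risk = Vulnerability * Threat * LCM, with LCM = 1 - CM
    residual = sum(v * t * (1.0 - cm) for v, t, cm in branches)
    final = residual * criticality   # Final risk = Residual risk * Criticality
    return residual, final, final * cost  # ECL = Final risk * Cost


# Two vulnerabilities; the first one has two threats, the second has one.
branches = [
    (0.5, 0.5, 0.75),
    (0.5, 0.5, 0.5),
    (0.5, 1.0, 0.5),
]
residual, final, ecl = expected_cost_of_loss(branches, criticality=0.5, cost=8_000)
```

Raising the CM value of a branch lowers the ECL, so the saving can be compared directly with the cost of the corresponding countermeasure.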

Analytic Hierarchy Process and Plan Do Check Act for Risk Evaluation

Analytic Hierarchy Process (AHP) is a multi-objective decision analysis method proposed by T. L. Saaty in the mid-1970s; it is often used for selecting among multiple options and for risk evaluation decision-making. AHP combines qualitative and quantitative analysis, as well as subjective and objective analysis, and it is systematic and hierarchical, which makes it suitable for formalizing a weight for the risk factors. These weights, combined with the Plan-Do-Check-Act cycle, are organized into a Risk Evaluation method presented in the article "The Research and Application of the Risk Evaluation and Management of Information Security Based on AHP Method and PDCA Method" by M. Meng, which can be consulted in the references for an example of application.
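As an illustration of how AHP turns pairwise judgments into weights, the following Python sketch uses the row geometric mean, a common approximation of Saaty's principal eigenvector, together with the usual consistency ratio check. It is not the procedure of the cited article; the three risk factors and the comparison values are hypothetical.

```python
import math


def ahp_weights(matrix):
    """Weights from a pairwise comparison matrix (row geometric mean method)."""
    gm = [math.prod(row) ** (1.0 / len(row)) for row in matrix]
    total = sum(gm)
    return [g / total for g in gm]


def consistency_ratio(matrix, weights):
    """Saaty's consistency ratio; values below 0.1 are usually considered acceptable."""
    n = len(matrix)
    aw = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(x / w for x, w in zip(aw, weights)) / n
    ci = (lambda_max - n) / (n - 1)
    random_index = {3: 0.58, 4: 0.90, 5: 1.12}[n]  # Saaty's random index values
    return ci / random_index


# Hypothetical judgments on Saaty's 1-9 scale: entry [i][j] says how much more
# important factor i is than factor j (factors: data loss, downtime, reputation).
comparisons = [
    [1,     3,     5],
    [1 / 3, 1,     3],
    [1 / 5, 1 / 3, 1],
]
weights = ahp_weights(comparisons)
cr = consistency_ratio(comparisons, weights)
```

The resulting weights sum to 1 and can be used to combine the scores of the single risk factors into one weighted risk value.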

Alternative Approaches

Alternative approaches are hybrid approaches, or other ways of tackling the risk indirectly, that can be combined with the more classical ones. Their aim is to cover the areas that the standard approaches cannot tackle because of their intrinsic weaknesses: nowadays none of the existing methodologies is able, alone, to fully cover the topic of risk assessment in most real complex cases.

- Semi-Quantitative
- Baseline Requirements
- Vulnerability Management

Semi-Quantitative

In semi-quantitative analysis the objective is to try to assign some values to the scales used in the qualitative assessment. These values are usually indicative and not real, which is the prerequisite of the quantitative approach. Therefore, as the value allocated to each scale is not an accurate representation of the actual magnitude of impact or likelihood, the numbers used must only be combined using a formula that recognizes the limitations or assumptions made in the description of the scales used. It should be also mentioned that the use of semi-quantitative analysis may lead to various inconsistencies due to the fact that the numbers chosen may not properly reflect analogies between risks, particularly when either consequences or likelihood are extreme.

Baseline Requirements

The aim of this approach is not to estimate the risk, prioritize it and then apply countermeasures; in a reverse process, the countermeasures are applied as widely as possible, provided that the cost/benefit ratio is positive. From this perspective many solutions, e.g. firewalls, IPS/IDS, antivirus etc., are applied within an organisation independently of the current risk, while affecting its theoretical values indirectly.

Vulnerability Management

Considering that a risk can become real only if both a threat and a vulnerability exist, this approach works to reduce or eliminate the known or possible vulnerabilities in the systems, indirectly reducing or removing all the associated risks.

Risk treatment

The purpose of risk treatment is to determine how to tackle the risks identified, quantified and classified in the Risk Assessment phase.

The main options for treating a risk are:

- to avoid the risk by deciding to stop or postpone an activity, project or business (Risk Elimination)
- to modify the likelihood and/or the consequences of the risk, trying to reduce or eliminate the likelihood or the losses of the negative outcomes (Risk Reduction)
- to share the risk with other parties facing the same risk: insurance arrangements and organisational structures such as partnerships and joint ventures can be used to spread responsibility and liability (Risk Transfer or Sharing)
- to retain the risk or its residual risks (Risk Retention)

The basic idea that can help to take a decision is the cost/benefit ratio: the cost of managing a risk with each of the options has to be compared with the expected benefits, both direct and indirect. It is also possible to combine two or more options to tackle a specific risk.

Risk Reduction is a series of countermeasures that, in the domain of Cyber Risk for example, are aimed at reducing the likelihood or the consequences of a cyber attack on the owned systems and the data contained in them. For example, a campaign of Vulnerability Assessments and Penetration Tests can verify the likelihood of a cyber attack aimed at the website of an organisation, and encrypting the databases that contain sensitive data can reduce the impact of a data loss. Considering the complexity of an organisation with strong digitalization and complex business processes, Risk Reduction is a very structured activity that implies a defined treatment plan, which usually includes:

- proposed actions, priorities or time plans,
- resources and budget requirements,
- roles and responsibilities of all parties involved in the proposed actions,
- performance and efficacy measurement,
- reporting and monitoring requirements.

The plan is itself a sub-cycle of the Risk Management process and leads, at the end of every iteration, to a residual risk that has to be tracked and evaluated again. Depending on the new evaluation, this leads to another cycle of Risk Treatment, such as implementing other technical countermeasures, or simply to accepting the residual risk and keeping it tracked for its future evolution. Risk Acceptance is usually defined at the top hierarchical level and implies that the management is well aware of the existence of this residual risk and of its related impacts, and that the risk is accepted as it is.

Risk Transfer is another way of treating the risk. In many other fields it is a commonly applied solution, e.g. the car insurance that each driver has or the insurance on a new house: a certain amount is paid regularly so that another entity covers a much larger loss in case a risk becomes real. In the field of Cyber risk, so-called Cyberinsurance policies are an instrument that is starting to be applied, although with many limitations; some examples and analyses of Cyberinsurance are offered in "Cyberinsurance in IT Security Management" by W. Baer and A. Parkinson and in "Mitigating Risk with Cyberinsurance" by Per Håkon Meland, Inger Anne Tøndel and Bjørnar Solhaug (see references). The main aspect is that a "normal" risk such as a fire, a theft or any other physical action is easily detected and remediated, while for Cyberattacks the time between the action, its detection, the understanding of the impact and the reaction could be dramatically longer; to offer adequate coverage, insurance policies should include all those phases, since all of them generate a loss. Moreover, it is not easy to define the liabilities in case of a Cyberattack.

Monitoring and reporting

A relevant point is that the whole process of risk management is not a linear series of activities but a cycle. It includes a monitor and review sub-process which ensures that all the analyses performed and the consequent action plans are monitored in their phases and kept up to date with today's fast-changing environment, especially in the IT and Cyber domain, where new threats or vulnerabilities emerge almost on a daily basis and affect both the number of known risks and their relevance. The first iteration of the cycle has to be documented and has to produce, at the end, a collection of all the risks that emerged (the so-called Risk Register) and of all the treatment options chosen, with their action plans and countermeasures. The recording is also relevant for creating a baseline that, being kept as a historical record, enables the organisation to identify trends and to adjust the whole process according to the general evolution of the risks, the context and the countermeasures available. It is also very important that all the documents and reports produced by the Risk Assessment are classified as critical and confidential information: they contain information that could help an attacker, internal or external, to exploit one or more of the vulnerabilities that emerged during the process.

Communicating the Risk

A crucial aim of Risk Management is communicating and creating awareness of the relevance of the Risk Management process within an organisation: involving all the relevant stakeholders in the process, creating a common base for understanding the Risk at all levels, and conveying the need to embed its management in all the processes of the organisation and at decision-making level.

Moreover, a clear understanding of the Risk, of the aim of Risk Management to protect value at all levels and of the possible consequences in terms of monetary loss is crucial to give adequate attention to resource allocation for the general Risk Management process and to keep the commitment of the top management in all its phases.

A shared knowledge of the risk also helps to reduce a risk perception based solely on personal judgment, which creates a wide variety of differences in values, assumptions, concepts and priorities. The view of a stakeholder who is not adequately aware of the topic can lead to wrong identification, recording and management of the risk at decision-making level, undermining the fundamentals of the whole designed Risk Management process.

Automation - Common practices and opportunities

Risk Management Software is a category of tools aimed at automating the process of Risk Management, ideally covering all the steps identified by the ISO 27001 standard as the Plan-Do-Check-Act cycle.

The tool should help to identify the risks within an organization, take appropriate measures to mitigate them, monitor the implementation of those measures and present reports and dashboards aimed at reviewing the whole process.

The automation of the Risk Management process is defined by Gartner as “a set of practices and processes supported by a risk-aware culture and enabling technologies that improve decision making and performance through an integrated view of how well an organization manages its unique set of risks”.

Risk management software helps organizations to measure the level of risk in ongoing projects and processes, considering also the asset interconnections and the growing complexity of the IT environment, and then to generate meaningful insights as well as an action plan, leveraging automation to cope with the growing complexity within organizations and IT systems.

Automation in Risk Management is applied in an increasing number of organizations and is almost mandatory for the large ones: it is in fact the only chance to keep track of all the Processes and Assets, their interconnections and the current regulations, and to have a statistically valid methodology for calculating the Risk Level.

The risk monitoring and reviewing phase, made of a large number of tasks, deadlines and owners, is carried out most of the time with automated systems such as GRC tools, which are widely used to keep the asset database, the CMDB and the risk register, enabling the organization to track the changes to them and to have a complete view of its own structure.

Policy Tools

The article "From risk analysis to effective security management: towards an automated approach" by Vassilis Tsoumas and Theodore Tryfonas presents a proposal for applying automation to the extraction of security controls and requirements directly from policies or high-level documents. The authors underline that all the policies, guidelines and documents that are the baseline for structuring Risk Management are subject to misinterpretation and bias and depend on the experience of the reader and interpreter. To avoid this arbitrary component and, at a further stage, to transform the policies directly into specific security controls or technical configurations, an approach based on knowledge extraction from natural-language text is defined in order to make this translation possible.

The paper describes the requirements for a software tool that could assist in the transition from high-level security requirements to a formal, well-defined policy language that could be the baseline for technical configurations over the assets of an organization; for an example of this format see the SCAP paragraph.

Risk Management Automation Tools

Risk Management tools are theoretically based on the Security Relationship Model as formalized by NIST. Every tool or platform implements either part of the schema or the whole schema, or extends it to other processes and models that interact with the IS within an organization: the larger the coverage of the model included in a software, the more complex its functionality and logic. A software that pushes too far in one of the two directions, simple and easy to use or complex and comprehensive, can end up excluding many relevant factors in the first case, or including too many variables that are not known but just approximated in the second; both cases lead to an analysis far from reality, with negative effects on the processes of the organization.

The basic workflow that a Risk Management Tool should implement is:

- Mitigate risks and address threats: The main purpose of risk management software is to identify threats and address them within a defined deadline. Unaddressed issues can result in project failure, data breaches and events that generate negative reputation or regulatory issues for the company.

  The software should ensure that projects, processes and, in general, all asset management follow specific guidelines, often included in standards or best practices, allowing the management to act as soon as a situation not compliant with the guidelines is detected and to make corrections to mitigate the risk.

- Foster Risk Awareness: This family of software makes it possible to generate and update a risk register, usually regulated by standards in its structure, which includes in-depth details about each risk, its indicators, its impact, the actions required to avoid or minimize it and the history of their changes. A database helps to extract and present the evolution of risk within an organization and to make employees and management aware of the risks, the impact and the preventive actions to be taken. This helps to create a workforce that is more risk-aware and trained to operate systematically to reduce risk.

- Risk prioritization: The core of the software assesses the risks and assigns a relevant score to each of them, allowing the management to prioritize risks, assign adequate resources to resolve the issues and ensure that the impact is minimized.
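The workflow above can be illustrated with a minimal in-memory risk register. This is only a sketch of the idea and not the data model of any specific product: the field names, the 1 to 5 scales and the score (likelihood × impact) are assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RiskEntry:
    risk_id: str
    description: str
    likelihood: int   # hypothetical 1-5 scale
    impact: int       # hypothetical 1-5 scale
    owner: str
    due: date         # deadline for the mitigation actions
    actions: list = field(default_factory=list)
    closed: bool = False

    @property
    def score(self):
        # ASSUMPTION: simple qualitative score, likelihood * impact
        return self.likelihood * self.impact


def prioritise(register):
    """Open risks ordered from the highest to the lowest score."""
    return sorted((r for r in register if not r.closed),
                  key=lambda r: r.score, reverse=True)


def overdue(register, today):
    """Open risks whose mitigation deadline has passed."""
    return [r for r in register if not r.closed and r.due < today]


register = [
    RiskEntry("R2", "Lost laptop with customer data", 2, 3, "HR", date(2024, 6, 1)),
    RiskEntry("R1", "Unpatched web server", 4, 5, "IT Ops", date(2024, 3, 1)),
    RiskEntry("R3", "Phishing campaign", 5, 3, "Security", date(2024, 1, 15), closed=True),
]
ranked = prioritise(register)
late = overdue(register, today=date(2024, 4, 1))
```

A real tool would add persistence, change tracking and reporting on top of this structure, but the register, the score and the deadline check are the core of the three workflow steps.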

Moreover, automation can bring great benefits in the following aspects:

- Risk Quantification and Analysis

One of the core activities that can benefit from automation is Risk Quantification and Analysis. In its simplest, qualitative forms this activity is performed by a simple numerical calculation on two factors. However, because of the rising level of complexity within an organization, the number of factors to be considered, the sub-factors that compose the main values of Impact and Likelihood, and the frequent changes, especially in the technological field, an accurate calculation requires a computation that can be performed only by an automated tool. Moreover, the most accurate methods, such as some of the ones presented in the dedicated paragraph, use statistical analysis on a large scale, simulations, predictive analysis and “what-if” scenarios: all those features are based on mathematical models and frequent iterative calculations that rely on a large number of samples in order to be accurate. In this field more than in many others, automation can also be crucial for keeping up with the frequent emergence of new threats and including them in the iterative process of Risk Assessment.

- Risk and Control Documentation/Assessment

The Risk Statements and the related Security Controls need to be documented and stored, with the possibility of exporting and presenting them to relevant stakeholders, both internal and external, and of performing the Risk Assessment at strategic, operational and technical level. To reach this level of service, Gartner has identified some required features, such as a general Risk Framework approach, libraries of requirements or controls that reflect standards or a risk taxonomy, a function to obtain risk indicators (Key Risk Indicators) and the possibility of including regulatory and compliance requirements, together with a mapping between policies and security controls.

- Incident Management

Including Incident Management in a tool for Risk Management is crucial for matching the risks identified during the analysis with what is really happening within the organization, i.e. the risks that are becoming real and are creating a concrete and measurable impact on the organization. These two components can support each other: Incident Management provides real data to estimate future risks and adjust the prioritization of the ones already assessed; in the other direction, the risk management results can help to identify part of the root causes that led to an incident.

- Risk Mitigation Action Planning

Risk Mitigation measures have to be adopted when the Risk Assessment produces Risks above the Risk Appetite thresholds. The approval, planning and monitoring of this plan require a commitment from the management of the organization; a tool that is able to organize the plan and make it available for extraction can facilitate its management and communication within the organization.

- KRI Monitoring/Reporting

The tool should enable the definition of Key Risk Indicators in order to offer a complete view of the risk within the organization, to provide an aggregate indicator that describes the risk at management level and to monitor trends at different levels of granularity, for example identifying in which area or business process the risk has increased or decreased significantly.
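A possible way of computing such an indicator is sketched below. The example assumes that the KRI of a business area is simply the average score of its risks and that a relative change of 20% between two assessment periods counts as significant; both choices, like the area names and the scores, are hypothetical.

```python
from collections import defaultdict


def kri_by_area(scores):
    """Average risk score per business area from (area, score) pairs."""
    grouped = defaultdict(list)
    for area, score in scores:
        grouped[area].append(score)
    return {area: sum(vals) / len(vals) for area, vals in grouped.items()}


def significant_changes(previous, current, threshold=0.2):
    """Relative change per area when it reaches the (hypothetical) threshold."""
    changes = {}
    for area, now in current.items():
        before = previous.get(area)
        if before:  # skip new areas and zero baselines
            delta = (now - before) / before
            if abs(delta) >= threshold:
                changes[area] = delta
    return changes


previous = {"Payments": 10.0, "HR": 4.0}   # KRI values of the previous period
current = kri_by_area([("Payments", 12), ("Payments", 14), ("HR", 4), ("HR", 4)])
changes = significant_changes(previous, current)
```

Only the areas whose indicator moved beyond the threshold are reported, which is the kind of aggregate view that can be presented at management level.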

The cost of risk management software

This information has been extracted from the Capterra website, which offers an overview of many products and their pricing: the products can be divided into three pricing tiers based on their starting price per year.

Price ranges:

- $70 - $400: 25th percentile of the whole sample
- $400 - $10,000: 75th percentile of the whole sample
- $10,000+: 100th percentile and maximum values found

The above list, which does not cover the products that do not publish their pricing policies, summarizes the pricing for the base plans of many products. Many enterprise products, however, do not offer only Risk Management features but also many others, such as Vulnerability Management, generic GRC Management, Change Management etc., so their prices are usually not affordable for SMEs: they come as a product bundle and deployment that is far beyond their capacities and needs.

Opportunities and Challenges behind automation

The opportunities behind the automation of the Risk Management process relate to the chance to respond with adequate resources to the growing complexity of organizations: the IT environment, the known threats and vulnerabilities, the devices in use and the changing regulatory system have created an environment that is not easy to understand by relying only on manual procedures or on knowledge based on personal perception and experience. In this context automated tools can help to store data and keep its history, analyze patterns, and perform statistical analysis and predictions, i.e. the core of the Risk Assessment task.

The same complexity can also be tackled by integrating various heterogeneous data sources into the Risk Management process: the information gathered from IDS, SIEM, Cyber Threat Intelligence, Vulnerability Assessment etc. can be leveraged to perform an analysis based on real data for that specific customer. Moreover, with an adequate base of customers from a specific market segment, it is possible to tune future analyses and predictions using data collected directly from real events, incidents and identified threats, thereby increasing the accuracy of the whole process and its cost-effectiveness.

Another aspect that could benefit from automation is the application of Security Controls in the technological field. The controls listed in the remediation plan for risk reduction are mainly divided into two groups: those applied to the human factor, such as processes, training and behaviours, and the technological ones, such as patching, system configurations etc. The second kind could be implemented directly by a platform with a high level of complexity and integration with other systems, provided there is a way of codifying them into configuration parameters or patching activities.

SCAP

An example could be a Risk Management platform that directly gathers the configurations of all the technological assets listed in the Asset Library and matches them against a list of security requirements to find known vulnerabilities, thereby calculating the risk on the technological assets from data gathered directly from them. As a next step, once the Security Controls to be applied to specific assets have been decided, the platform could transform them into configurations applied directly to the assets, and also make it possible to verify their correct application in a later phase.
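
The gather, match, apply and verify cycle described above can be sketched in a few lines of Python. This is a toy model independent of any real SCAP tooling: the setting names, the asset names and the plain equality check (a real requirement such as a minimum password length would need a richer comparison) are all assumptions for illustration.

```python
# Hypothetical security requirements: setting name -> required value
requirements = {
    "ssh.permit_root_login": "no",
    "password.min_length": 12,
}

# Hypothetical Asset Library with the configurations gathered from each asset
assets = {
    "web-01": {"ssh.permit_root_login": "yes", "password.min_length": 12},
    "db-01": {"ssh.permit_root_login": "no", "password.min_length": 8},
}


def find_deviations(config, required):
    """Return the required settings that the asset does not satisfy."""
    return {key: value for key, value in required.items()
            if config.get(key) != value}


def remediate(config, deviations):
    """Return a new configuration with the required values applied."""
    updated = dict(config)
    updated.update(deviations)
    return updated


for name, config in assets.items():
    deviations = find_deviations(config, requirements)
    fixed = remediate(config, deviations)
    # Verification phase: after remediation no deviation should remain.
    still_open = find_deviations(fixed, requirements)
    print(name, "deviations:", deviations, "remaining:", still_open)
```

In a real platform the `remediate` step would push settings to the device rather than update a dictionary, which is where a vendor-neutral configuration standard becomes necessary.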

To enable this process and leverage its automation, it is necessary to define a configuration standard that is common to different vendors and devices and that can be understood by a third-party platform, so that Security Controls can be transformed into configurations.

What is required is a way to transform requirements written in natural language into configurations of technological assets. SCAP (Security Content Automation Protocol) has been developed for this purpose, and part of its implementation is still a work in progress: SCAP is a multi-purpose framework of specifications that supports automated configuration, vulnerability and patch checking, technical control compliance activities and security measurement.

SCAP is composed of a series of specifications enabling the automation of parts of the whole Information Security Risk Management process:

-

Common Platform Enumeration (CPE): Nomenclature and dictionary of product names and versions.

-

Common Configuration Enumeration (CCE): Nomenclature and dictionary of system configuration issues.

-

Common Vulnerabilities and Exposures (CVE): Nomenclature and dictionary of security-related software flaws.

-

Common Vulnerability Scoring System (CVSS): Specification for measuring the relative severity of software flaw vulnerabilities.

-

Extensible Configuration Checklist Description Format (XCCDF): Language for specifying checklists and reporting checklist results.

-

Open Vulnerability and Assessment Language (OVAL): Language for specifying low-level testing procedures used by checklists.
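
To illustrate how one of these specifications lends itself to automation, the sketch below computes a CVSS v3.1 base score from a vector string, using the base metric weights and equations published in the CVSS v3.1 specification. It covers only the base metrics (no temporal or environmental metrics) and performs no input validation; the function and constant names are illustrative.

```python
import math

# Base metric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value):
    """Round up to one decimal place, as defined by CVSS v3.1."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector):
    """Compute the base score of a vector such as 'CVSS:3.1/AV:N/.../A:H'."""
    # Skip the 'CVSS:3.1' prefix and build a metric -> value mapping.
    metrics = dict(part.split(":") for part in vector.split("/")[1:])
    changed = metrics["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[metrics["PR"]]

    # Impact sub-score
    iss = 1 - ((1 - CIA[metrics["C"]])
               * (1 - CIA[metrics["I"]])
               * (1 - CIA[metrics["A"]]))
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss

    # Exploitability sub-score
    exploitability = (8.22 * AV[metrics["AV"]] * AC[metrics["AC"]]
                      * pr * UI[metrics["UI"]])

    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


print(base_score("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
```

A Risk Management platform could use such a score as one input to the likelihood or impact estimate of a technological asset, alongside the asset's business value.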

The prerequisite for implementing this automation is to identify which security controls can be automated. The article "Automation Possibilities in Information Security Management" by R. Montesino presents an example of a subset of ISO 27001 controls that can be automated, together with a complete examination of the SCAP protocol and its possible application in an integrated platform. For further reading, the article "Information Security Automation: How Far Can We Go?" presents which controls from the two most widely applied standards, ISO 27001 and NIST SP 800-53, can be automated and how this could be leveraged in developing security applications that support automation in the operation of information security controls.

Remarks

Despite the clear benefits that automated tools could bring to organizations, we should remember that tools serve to speed up, improve the accuracy of, and obtain better results from a process that has to be performed by humans. Automation in Risk Management, especially for SMEs, is not only a matter of adopting a tool but mainly of aligning the internal organization and processes with the approach proposed by the tool. This change can of course raise user resistance within an organization and could undermine the successful adoption of the chosen tool. To accomplish and facilitate this change, two preliminary activities could be performed:

-

Dedicating time during regularly scheduled governance team meetings to discuss the Risk Automation project, defining its own risks and the issues encountered during the reorganization of operational processes.

-

Applying best practices for organizational change management, including managing communications and expectations, aligning pre-automation deployment with operations, planning organizational design and creating step-by-step transition plans that specify all the steps for the deployment of rapid, successive waves of automated processes.

In this way, by aligning what is already done with the mindset introduced by the tool, it is possible to raise the general quality of Risk Management within an organization, capitalize on the economic investment made in adopting the tool, and become compliant with widely accepted standards and regulations in order to remain competitive.

References

-

ISO 31000:2018 — Risk management — guidelines. International Organization for Standardization. https://www.iso.org/standard/65694.html

-

ISO/IEC 27005:2018 — Information security risk management. International Organization for Standardization. https://www.iso.org/standard/75281.html

-

The Open Group — FAIR (Factor Analysis of Information Risk) Guide. The Open Group. https://publications.opengroup.org/c13g

-

The Open Group — Requirements for Risk Assessment Methodologies. The Open Group. https://publications.opengroup.org/g081

-

NIST Special Publication 800-30 Revision 1 — Guide for Conducting Risk Assessments. National Institute of Standards and Technology (NIST). https://csrc.nist.gov/publications/detail/sp/800-30/rev-1/final

-

ENISA — Risk Management: Principles and Inventories for Risk Management, Risk Assessment Methods and Tools. European Union Agency for Cybersecurity (ENISA). https://www.enisa.europa.eu/publications/risk-management-principles-and-inventories-for-risk-management-risk-assessment-methods-and-tools

-

OWASP Risk Rating Methodology. Open Web Application Security Project (OWASP). https://owasp.org/www-project-risk-rating-methodology/

-

IEEE — Quantitative OpRisk Analysis in Information Security Management (example literature on Monte Carlo / quantitative methods). https://ieeexplore.ieee.org/

-

Solhaug, B., Stolen, K., & Jaatun, M. G. — Mitigating Risk with Cyberinsurance and Other Risk Transfer Approaches (representative literature). https://www.researchgate.net/

-

Gartner — Magic Quadrant for Integrated Risk Management Solutions (reference for market positioning and tools). https://www.gartner.com/

-

SCAP — Security Content Automation Protocol (NIST). https://csrc.nist.gov/projects/security-content-automation-protocol

-

Relevant academic and applied research articles cited in the text (FAIR, AHP/PDCA risk evaluation, automated risk management approaches) include papers accessible via IEEE Xplore and ResearchGate; consult the document body for contextual pointers and the following representative works:

- Liping, Z., Wu, W., & Xue, L. — Research of Information Security Risk Management based on Statistical Learning Theory. IEEE Xplore. https://ieeexplore.ieee.org/

- Sahin, M., et al. — Security Metrics and Decision-Tree Models to Quantify Risk. IEEE Xplore. https://ieeexplore.ieee.org/

- Further reading and tools:

- FAIR Institute — https://www.fairinstitute.org/

- OCTAVE (CERT) — https://resources.sei.cmu.edu/library/subject-areas/octave/

- EBIOS (ANSSI) — https://www.ssi.gouv.fr/en/eng/ebios-risk-manager/
